Artificial intelligence in imaging analysis will become highly reliable by 2025. AI medical image analysis systems provide tumor detection accuracy of up to 96% and increase the effectiveness of breast cancer screening by 20%. Medical device software development services use AI to reduce diagnostic errors and speed up report generation by 45%. In X-ray analysis, AI-assisted results are comparable to, or even better than, those of radiologists, which makes AI a reliable tool in clinical workflows.

AI in Medical Image Analysis

As mentioned earlier, machine learning lies at the heart of medical image processing. Because they can work through large amounts of data in a fraction of the time a human needs, deep learning algorithms help physicians analyze and interpret medical images and pick up even the subtlest details.

Imaging modalities covered by AI image analysis include:

- Computed tomography (CT) scans

- Magnetic resonance imaging (MRI)

- X-ray imaging

- Positron emission tomography (PET) scans

- Ultrasound imaging

- Single-photon emission computed tomography (SPECT) scans

- Radiographic imaging

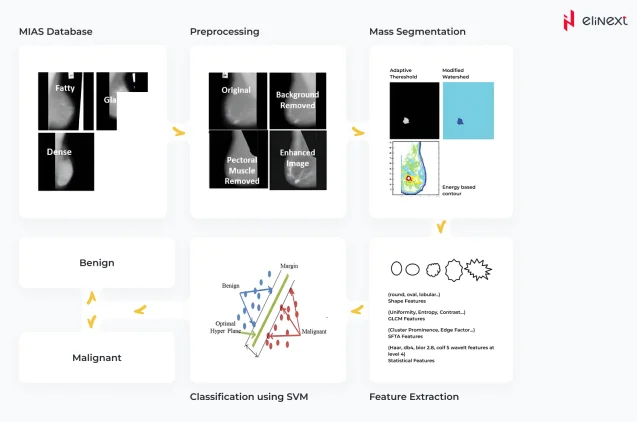

As shown in the diagram, the core stages of medical image analysis are pre-processing, segmentation, feature extraction, and classification.

Pre-processing

At this stage, the raw image is processed to improve its quality and make subsequent computation faster. Pre-processing activities include removing noise and background artifacts and normalizing or enhancing contrast.
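To make the pre-processing stage concrete, here is a minimal sketch in Python with NumPy. It assumes a single-channel 2D image stored as a NumPy array and uses two illustrative operations: a 3x3 median filter to remove impulse ("salt") noise and min-max contrast stretching. The function names are hypothetical, and real pipelines usually rely on dedicated imaging libraries and modality-specific steps.

```python
import numpy as np


def median_filter_3x3(image: np.ndarray) -> np.ndarray:
    """Replace each pixel with the median of its 3x3 neighborhood.

    Borders are handled by reflecting the image, so the output keeps the input shape.
    """
    padded = np.pad(image, 1, mode="reflect")
    height, width = image.shape
    # Nine shifted views together cover every pixel's 3x3 neighborhood.
    neighbors = np.stack(
        [padded[dy:dy + height, dx:dx + width] for dy in range(3) for dx in range(3)]
    )
    return np.median(neighbors, axis=0)


def stretch_contrast(image: np.ndarray) -> np.ndarray:
    """Linearly rescale intensities to the [0, 1] range (min-max normalization)."""
    low, high = float(image.min()), float(image.max())
    if high == low:
        # A flat image has no contrast to stretch.
        return np.zeros_like(image, dtype=float)
    return (image - low) / (high - low)


def preprocess(image: np.ndarray) -> np.ndarray:
    """Denoise first, then normalize contrast."""
    return stretch_contrast(median_filter_3x3(image.astype(float)))


# Usage: a horizontal intensity ramp with a single bright noise pixel.
scan = np.tile(np.arange(5, dtype=float) * 10, (5, 1))
scan[2, 2] = 255.0
clean = preprocess(scan)
```

Denoising before stretching matters here: if the 255 outlier survived, it would set the top of the intensity range and compress all genuine tissue values toward zero.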

Segmentation

Segmentation, one of the core tasks in medical image analysis, partitions the image into sets of pixels (segments) to locate objects and their boundaries. Each segment is a region whose pixels share similar properties such as color, texture, brightness, and contrast. High variability within images and the range of imaging modalities make this task challenging.
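As an illustration, the sketch below uses one of the simplest segmentation techniques: a global intensity threshold followed by connected-component labeling, so that each group of touching above-threshold pixels becomes one segment. This technique is an assumption chosen for readability; deep learning systems typically learn segmentation with networks such as U-Net instead. The `segment` function and its `threshold` parameter are hypothetical.

```python
from collections import deque

import numpy as np


def segment(image: np.ndarray, threshold: float) -> tuple[np.ndarray, int]:
    """Label 4-connected regions of pixels whose intensity is >= threshold.

    Returns a label map (0 = background, 1..n = segments) and the segment count n.
    """
    mask = image >= threshold
    labels = np.zeros(image.shape, dtype=int)
    height, width = image.shape
    count = 0
    for y in range(height):
        for x in range(width):
            if not mask[y, x] or labels[y, x]:
                continue  # background, or already part of a segment
            count += 1
            labels[y, x] = count
            queue = deque([(y, x)])
            # Breadth-first flood fill over up/down/left/right neighbors.
            while queue:
                cy, cx = queue.popleft()
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if 0 <= ny < height and 0 <= nx < width and mask[ny, nx] and not labels[ny, nx]:
                        labels[ny, nx] = count
                        queue.append((ny, nx))
    return labels, count


# Usage: two bright blobs on a dark background.
image = np.zeros((6, 6))
image[0:2, 0:2] = 1.0
image[4:6, 3:6] = 0.8
labels, n_segments = segment(image, threshold=0.5)
```

A single global threshold is exactly what struggles with the variability mentioned above: when brightness differs between scans or modalities, no one cut-off separates every structure from the background.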

Feature extraction

After segmentation, regions of interest (ROIs) are analyzed further for specific characteristics such as gray levels, texture, patterns, and distortions.
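The sketch below computes a few simple descriptors for one ROI, given the image and a boolean mask marking the region (for example, one label from a segmentation map). It assumes a 2D NumPy array, and the feature set is illustrative: area, mean and standard deviation of gray levels, a bounding box, and a horizontal neighbor contrast that is a simplified, unquantized version of the gray-level co-occurrence (GLCM) contrast used in texture analysis. Production radiomics pipelines compute much larger, standardized feature sets.

```python
import numpy as np


def roi_features(image: np.ndarray, mask: np.ndarray) -> dict:
    """Compute simple gray-level and texture descriptors for pixels where mask is True."""
    values = image[mask]
    rows, cols = np.nonzero(mask)
    # Pairs of horizontally adjacent pixels that both lie inside the ROI.
    pairs = mask[:, :-1] & mask[:, 1:]
    diffs = image[:, 1:] - image[:, :-1]
    contrast = float(np.mean(diffs[pairs] ** 2)) if pairs.any() else 0.0
    return {
        "area": int(mask.sum()),
        "mean_gray": float(values.mean()),
        "std_gray": float(values.std()),
        "bbox": (int(rows.min()), int(cols.min()), int(rows.max()), int(cols.max())),
        "h_contrast": contrast,  # mean squared difference between horizontal neighbors
    }


# Usage: a 2x2 ROI whose columns alternate between gray levels 2 and 4.
image = np.array(
    [[0, 0, 0, 0],
     [0, 2, 4, 0],
     [0, 2, 4, 0],
     [0, 0, 0, 0]],
    dtype=float,
)
features = roi_features(image, image > 0)
```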

Classification

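Finally, a classification algorithm evaluates each ROI and assigns it a score. If the score reaches the threshold level, the system automatically flags the structure as abnormal. When these predictions are compared against the ground truth (confirmed diagnoses), each one falls into one of four categories: true positive, false positive, true negative, or false negative, which is how the system's accuracy is measured.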
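A minimal sketch of this final stage, assuming the classifier has already produced an abnormality score between 0 and 1 for each ROI. Scores at or above a threshold (0.5 here, an arbitrary illustrative value) are flagged as abnormal, and tallying the flags against ground-truth labels yields the four outcome counts, from which sensitivity and specificity follow. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Outcomes:
    tp: int = 0  # flagged abnormal, truly abnormal
    fp: int = 0  # flagged abnormal, actually normal
    tn: int = 0  # not flagged, actually normal
    fn: int = 0  # not flagged, truly abnormal (a missed finding)

    @property
    def sensitivity(self) -> float:
        """Share of truly abnormal ROIs that were flagged."""
        return self.tp / (self.tp + self.fn) if self.tp + self.fn else 0.0

    @property
    def specificity(self) -> float:
        """Share of truly normal ROIs that were not flagged."""
        return self.tn / (self.tn + self.fp) if self.tn + self.fp else 0.0


def flag_abnormal(scores: list[float], threshold: float = 0.5) -> list[bool]:
    """Flag every ROI whose abnormality score reaches the threshold."""
    return [score >= threshold for score in scores]


def evaluate(flags: list[bool], truth: list[bool]) -> Outcomes:
    """Tally flags against ground-truth labels (True = abnormal)."""
    out = Outcomes()
    for flagged, abnormal in zip(flags, truth):
        if flagged and abnormal:
            out.tp += 1
        elif flagged:
            out.fp += 1
        elif abnormal:
            out.fn += 1
        else:
            out.tn += 1
    return out


# Usage: four ROIs scored by a hypothetical classifier.
flags = flag_abnormal([0.9, 0.4, 0.7, 0.2])
result = evaluate(flags, truth=[True, True, False, False])
```

Lowering the threshold flags more ROIs, which tends to raise sensitivity at the cost of specificity; choosing it is a clinical trade-off rather than a purely technical one.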

Case in point

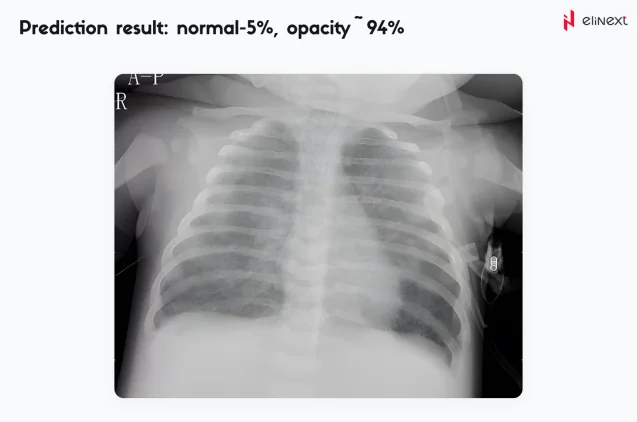

An automated pneumonia diagnosis tool, part of a comprehensive telehealth platform, was designed to help identify signs of pneumonia using machine learning. The tool is built on Inception v3, a convolutional neural network developed by Google Research, which was further trained on a curated dataset of lung images.

The resulting algorithm makes a binary decision: if its score for "unaffected" is 80% or higher, it marks the lungs as healthy. Below 80%, the model assumes the lungs might be affected and flags them for expert medical attention. Besides helping to reduce human error, the solution can ease the workload of healthcare professionals.
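The decision rule can be sketched as follows. The case study does not specify the model's output format, so this sketch assumes that the 80% refers to the predicted probability that the lungs are unaffected, and that the network returns two raw scores (logits) ordered as [unaffected, affected], which a softmax converts to probabilities. The 80% cut-off comes from the text; the function names and output labels are hypothetical.

```python
import math

HEALTHY_THRESHOLD = 0.80  # cut-off described in the case study


def softmax(logits: list[float]) -> list[float]:
    """Convert raw class scores to probabilities (shifted by the max for numerical stability)."""
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def triage_lungs(logits: list[float]) -> str:
    """Return "healthy" only if P(unaffected) >= 80%; otherwise refer for expert review.

    Assumes logits are ordered as [unaffected, affected].
    """
    p_unaffected = softmax(logits)[0]
    return "healthy" if p_unaffected >= HEALTHY_THRESHOLD else "refer to specialist"


# Usage
print(triage_lungs([2.0, 0.0]))  # healthy (P(unaffected) is about 0.88)
print(triage_lungs([0.5, 0.0]))  # refer to specialist (P(unaffected) is about 0.62)
```

Routing everything below the cut-off to a specialist, rather than labeling it "pneumonia", keeps the tool in an assistive role: it only clears clearly healthy cases and leaves the uncertain ones to a human.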

An automated pneumonia diagnosis tool

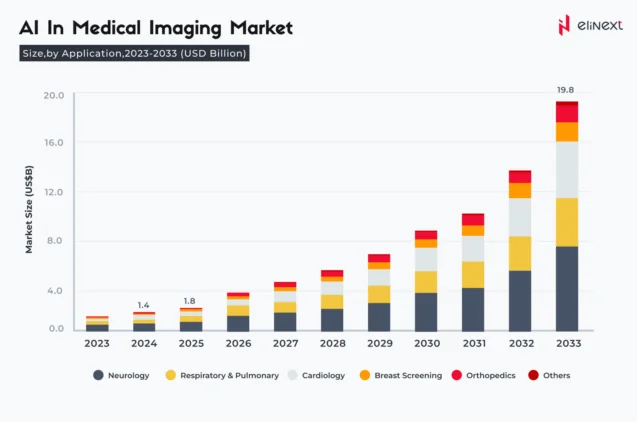

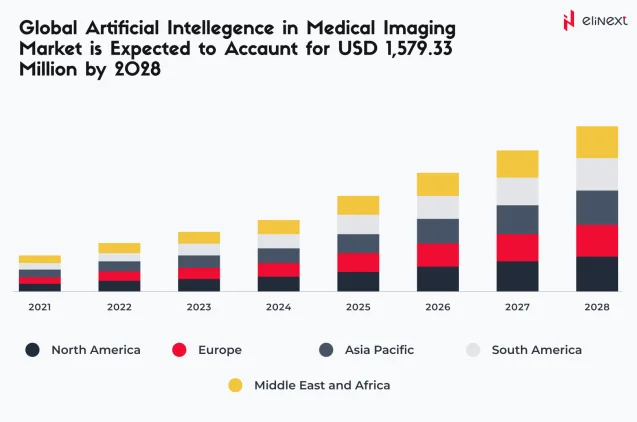

Advances in deep learning have accelerated the adoption of medical image analysis solutions. The global market for AI-powered medical imaging solutions is expected to reach USD 1.5 billion by 2028.

From detecting breast and skin cancer to identifying cardiac pathologies, automated medical image analysis helps medical professionals reach an accurate diagnosis faster, which can improve survival rates. With so much at stake, it’s only natural to wonder how reliable and accurate these algorithms are.

Is AI medical image analysis on par with healthcare professionals?

AI solutions for medical image analysis now match or outperform human experts on many metrics. In 2025, AI in medical image analysis will detect more breast cancer cases than double reading by radiologists.

Focus on AI for medical image analysis to improve diagnostic accuracy, reduce errors, and improve treatment outcomes.

Invest in AI-based medical image analysis solutions for a smarter and safer future.

What are the limitations of AI in medical image analysis?

AI for medical image analysis faces challenges such as data bias, limited generalizability, and decreased accuracy with low-quality images or rare conditions.

“Artificial intelligence in imaging analysis is revolutionizing diagnostics. Combining speed, accuracy, and consistency, AI enables physicians to detect diseases early and reduce errors. The future of healthcare depends on reliable and effective AI solutions for medical imaging.”

Elinext software development expert

What is the Future Outlook for AI in Medical Image Analysis?

By 2026, artificial intelligence in imaging analysis will become more widespread, more accurate, and more deeply integrated into clinical workflows, making diagnostics faster and more precise. AI will enable early disease detection, automated image interpretation, and personalized treatment. AI-powered tools already match or outperform radiologists in detecting certain cancers and rare diseases, leading to faster diagnosis and better treatment outcomes. As algorithms mature, tighter workflow integration and regulatory approval will drive even wider adoption, making AI an integral part of modern healthcare.

Conclusion

AI medical image analysis and healthcare software development services are transforming diagnostics. By 2025, AI will achieve 94–96% accuracy in tumor detection and radiographic diagnostics, reduce radiologists’ workload by 44%, and speed up report generation by 45%. AI-based breast cancer screening detects more cases without increasing the rate of false positives, demonstrating the reliability and value of AI in modern healthcare.

FAQ

What is AI medical image analysis?

AI in medical image analysis uses algorithms to interpret scans, detect abnormalities, and assist diagnosis. For example, AI can identify tumors in CT or MRI images with high accuracy.

How accurate is AI compared to human radiologists?

Artificial intelligence imaging analysis matches or exceeds the capabilities of human radiologists, achieving accuracy of up to 96% in tumor detection and 94% in radiographic diagnostics.

Can AI reduce human error in medical imaging?

Artificial intelligence in imaging analysis reduces the likelihood of human error by providing consistent, fatigue-free analysis and highlighting subtle findings that might otherwise be missed.

How reliable is AI in detecting early-stage diseases?

AI for medical image analysis is highly reliable: in clinical studies, it enables the detection of cancer and retinal diseases at early stages with an accuracy of over 94%.

Does AI improve efficiency in radiology departments?

AI in medical image analysis speeds up report generation by 45% and reduces radiologists’ workload by 44%, increasing efficiency and throughput.

Is AI helpful for personalized treatment planning?

Yes. AI-powered radiomics on MRI and CT scans makes it possible to predict treatment response and adapt treatment accordingly. For example, AI applied to breast MRI can support decisions on neoadjuvant chemotherapy for HER2-positive tumors and identify the risk of cardiotoxicity, helping clinicians select a treatment regimen and monitor the patient more closely.

How does AI handle complex or ambiguous cases?

Artificial intelligence imaging analysis handles ambiguity using uncertainty assessment, model ensembles, and human-in-the-loop triage. Example: a 6 mm borderline lung nodule is assigned low confidence, so the system routes the case to a radiologist for review and recommends a short-interval follow-up CT scan.
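One way to combine ensembles with uncertainty assessment is sketched below: several models score the same finding, their mean serves as the prediction, and their spread measures disagreement. Cases that fall close to the decision threshold, or on which the models disagree, are routed to a radiologist instead of being labeled automatically. All thresholds (0.5 decision threshold, 0.15 margin, 0.1 maximum spread) and the function name are illustrative assumptions, not clinical values.

```python
from statistics import mean, pstdev


def triage_finding(
    ensemble_probs: list[float],
    decision_threshold: float = 0.5,
    margin: float = 0.15,
    max_spread: float = 0.1,
) -> str:
    """Decide automatically only when the ensemble is confident and in agreement."""
    p = mean(ensemble_probs)          # ensemble estimate of P(abnormal)
    spread = pstdev(ensemble_probs)   # disagreement between ensemble members
    borderline = abs(p - decision_threshold) < margin
    if borderline or spread > max_spread:
        return "route to radiologist"
    return "abnormal" if p >= decision_threshold else "normal"


# Usage: three hypothetical models scoring the same nodule.
print(triage_finding([0.90, 0.92, 0.88]))  # confident and consistent
print(triage_finding([0.45, 0.60, 0.50]))  # mean sits near the threshold
print(triage_finding([0.95, 0.20, 0.90]))  # models disagree strongly
```

The two checks catch different failure modes: a borderline mean signals a genuinely ambiguous finding, while a large spread signals that the models themselves are unreliable on this input, even if their average looks decisive.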

Is AI in medical imaging regulated?

Yes. AI software for medical image analysis is regulated as a medical device (FDA 510(k) or De Novo in the US, CE marking under the EU MDR, UKCA marking in the UK). For example, an FDA-cleared large vessel occlusion (LVO) stroke triage tool must demonstrate clinical effectiveness, maintain an audit trail, and undergo post-market surveillance.