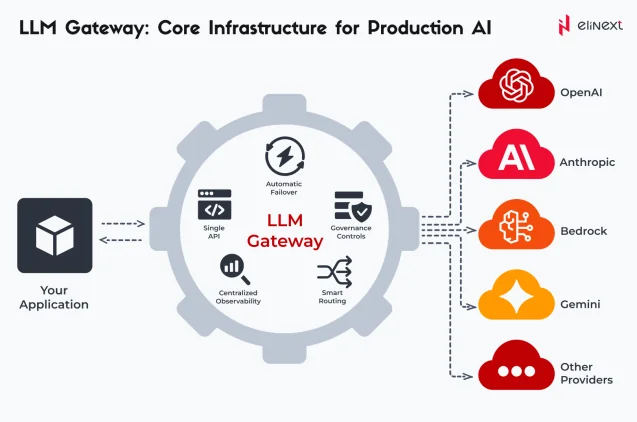

A large language model (LLM) gateway is a centralized interface that unifies access to multiple models behind a single API. It lets businesses manage neural networks from one place, with consistent security and cost control. These gateways improve resilience through automatic failover and reduce spend through request caching.

As companies actively move to full-scale AI infrastructure, LLM gateways have become mission-critical. This transition drives massive growth: the LLM gateway market reached $2.76B in 2026 and is projected to hit $7.21B by 2030.

What Is an LLM Gateway?

An LLM gateway is an intelligent layer between your app and dozens of neural network models. It handles request routing and response caching to optimize budgets, and it provides real-time performance monitoring. Modern LLM gateway architecture also enables seamless scaling and unified governance across all AI components.

Single API

It is a single interface for interacting with any supported neural network via a standardized request. It reduces vendor lock-in and simplifies your architecture. As a result, development can speed up by as much as 2x, and migrating to a new model can take only minutes.
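To make the idea concrete, here is a minimal sketch of a single entry point that dispatches a standardized request to provider adapters. The names `ChatRequest`, `complete`, `provider_a`, and `provider_b` are hypothetical and invented for illustration; a real gateway would call each vendor's SDK inside the adapters.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ChatRequest:
    model: str   # "<provider>/<model>", e.g. "provider_a/small" (hypothetical names)
    prompt: str


# Hypothetical adapters: each one would wrap a vendor SDK and translate
# its response into the shared format. Here they only echo the prompt.
def call_provider_a(prompt: str) -> str:
    return f"[provider_a] {prompt}"


def call_provider_b(prompt: str) -> str:
    return f"[provider_b] {prompt}"


ADAPTERS: Dict[str, Callable[[str], str]] = {
    "provider_a": call_provider_a,
    "provider_b": call_provider_b,
}


def complete(request: ChatRequest) -> str:
    """Send a standardized request to the adapter named in the model prefix."""
    provider, _, _model_name = request.model.partition("/")
    if provider not in ADAPTERS:
        raise ValueError(f"unknown provider: {provider}")
    return ADAPTERS[provider](request.prompt)
```

With this shape, switching providers means changing the `model` string in the request while the calling code stays the same.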

Automatic Failover

It’s a mechanism for near-instant failover to a backup model or region if the primary provider fails. It ensures the continuity of critical business processes. AI service uptime can reach 99.99%, minimizing downtime and reputational risks.
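A rough sketch of the failover logic, assuming providers are tried in priority order and any `ProviderError` triggers the next one. The `primary` and `backup` functions are hypothetical stand-ins for real provider clients.

```python
from typing import Callable, List, Tuple


class ProviderError(Exception):
    """Raised when a provider call fails (timeout, 5xx, and so on)."""


def call_with_failover(
    prompt: str,
    providers: List[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and fall through to the next provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real provider clients.
def primary(prompt: str) -> str:
    raise ProviderError("503 from primary")


def backup(prompt: str) -> str:
    return f"backup answer to: {prompt}"
```

Calling `call_with_failover("ping", [("primary", primary), ("backup", backup)])` returns the backup's answer because the primary raises. Production gateways typically add timeouts, retries with backoff, and health checks on top of this basic loop.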

Smart Routing

It is an AI gateway feature that directs requests to the best model based on cost, latency, or quality. It cuts expenses by using cheaper models for simple tasks and works alongside automatic failover to keep services available, boosting overall ROI.
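One simple way to express cost-based routing is "pick the cheapest model that is good enough for the task". The sketch below assumes a made-up quality scale and illustrative prices; the model names and numbers are not real.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # illustrative numbers, not real prices
    quality: int               # 1 (basic) to 5 (best), a made-up scale


CATALOG: List[ModelOption] = [
    ModelOption("small-model", 0.10, 2),
    ModelOption("medium-model", 0.50, 3),
    ModelOption("large-model", 2.00, 5),
]


def pick_model(required_quality: int, catalog: List[ModelOption] = CATALOG) -> ModelOption:
    """Return the cheapest model whose quality meets the requirement."""
    eligible = [m for m in catalog if m.quality >= required_quality]
    if not eligible:
        raise ValueError("no model meets the required quality")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)
```

A simple classification task might call `pick_model(1)` and land on the small model, while a complex reasoning task calls `pick_model(4)` and gets the large one. Real routers usually also weigh latency and current provider health.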

Centralized Observability

It is a unified dashboard for tracking all requests and token consumption in real time. Centralized observability helps control resource usage across the entire team, ensuring full spending transparency and precise AI performance analytics.
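Behind such a dashboard sits a per-request log that can be aggregated in different ways. The sketch below assumes a hypothetical `RequestLog` record and shows one aggregation, total tokens per team.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RequestLog:
    team: str
    model: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float


def tokens_by_team(logs: List[RequestLog]) -> Dict[str, int]:
    """Sum prompt and completion tokens for each team."""
    totals: Dict[str, int] = defaultdict(int)
    for entry in logs:
        totals[entry.team] += entry.prompt_tokens + entry.completion_tokens
    return dict(totals)
```

The same records could be grouped by model or time window to show latency trends or cost per feature.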

Governance Controls

It’s a comprehensive policy framework for access control, PII filtering, and regulatory compliance. It helps you adhere to corporate security standards and prevent data leaks. It strengthens data protection and helps prevent budget overruns.

Ready to scale your AI infrastructure without unnecessary costs or risks? Explore our AI software development services and implement an LLM gateway to gain full control over the security, budget, and performance of your neural networks.
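As an illustration of PII filtering, the sketch below redacts two common patterns from a prompt before it leaves the gateway. The regular expressions are deliberately simple assumptions (email addresses and US-style SSNs only); production filters cover far more cases and often use dedicated detection services.

```python
import re

# Simplified patterns chosen for illustration; they will miss many real-world formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_pii(prompt: str) -> str:
    """Replace matched emails and SSN-like numbers with placeholders."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    prompt = US_SSN.sub("[SSN]", prompt)
    return prompt
```

For example, `redact_pii("Contact jane.doe@example.com, SSN 123-45-6789.")` returns `"Contact [EMAIL], SSN [SSN]."`.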

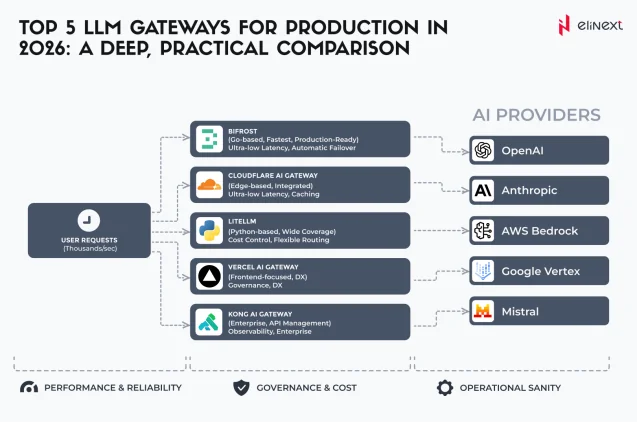

Top LLM Gateways for 2026: A Practical Comparison

When choosing an LLM gateway, look at the economic efficiency of your entire AI strategy. The market offers solutions for any task, ranging from lightweight open-source tools to powerful enterprise platforms. In this section, we break down the top five industry leaders that have become the gold standard due to their reliability and functionality.

Cloudflare AI Gateway

It is a global proxy layer built on Cloudflare’s edge infrastructure for managing and caching AI requests. It provides ultra-low latency and instant deployment for businesses already using Cloudflare’s ecosystem. The gateway allows businesses to significantly reduce API costs through edge caching and improve security against malicious prompts, making it ideal for high-traffic web applications.

Kong AI Gateway

It’s an extension of the world’s most popular API gateway, designed for large-scale enterprises. It unifies AI governance, allowing teams to apply consistent security, logging, and traffic policies across all LLMs. It is best for corporations with complex microservice architectures, giving them standardized AI usage and centralized credential management without rebuilding existing infrastructure.

Bifrost

It’s a high-performance gateway built in Go, engineered for mission-critical apps requiring sub-millisecond overhead. It excels at smart routing and automatic failover, helping maintain 99.99% uptime even during provider outages. Bifrost is well suited to developers of real-time AI agents and chatbots, delivering high reliability and 50x faster performance compared to Python-based alternatives.

LiteLLM

It is the industry-standard open-source proxy that translates various provider schemas into a unified OpenAI-compatible format. It eliminates vendor lock-in and simplifies cost tracking for startups and mid-sized teams. Many developers use it to build custom LLM gateway applications, resulting in 2x faster development cycles and effortless migration between models like GPT-4 and Claude.

Vercel AI Gateway

It’s a specialized tool for frontend developers and modern web apps within the Vercel ecosystem. It offers seamless integration with the AI SDK, providing observability and caching for serverless environments. Vercel AI Gateway is ideal for rapid prototyping and production-ready Next.js apps, resulting in streamlined deployments and a clear view of per-user token consumption in real time.

How to Choose the Right LLM Gateway?

In 2026, choosing an LLM gateway is a strategic capital management decision. Approximately 37% of enterprises now use 5+ models in production simultaneously, making gateways a requirement to avoid fragmented infrastructure.

Performance is the key differentiator: leaders like Bifrost add as little as 11 μs of gateway overhead, which is 50x faster than legacy tools. Prioritize semantic caching, which can cut token costs by about 40%, and built-in compliance, since 45% of AI use remains outside IT oversight. Efficiency here directly defines your AI strategy’s ROI.

“Integrating dozens of LLMs creates security chaos and unpredictable API costs. At Elinext, we solve this by building custom gateways with advanced routing logic and protective guardrails under our LLM development services. This transforms fragmented AI infrastructure into a manageable asset, reducing operational costs by 30% and ensuring full compliance with corporate data processing standards.” – Aliaksei Druzik, expert in AI and ML Transformation

Explore LLM Development Solutions by Elinext

Elinext transforms fragmented neural network models into resilient business systems via our custom LLM gateway applications. Our solutions feature intelligent routing, semantic caching, and deep analytics to control your costs and security. Offering both machine learning development services and generative AI development services, we provide end-to-end support for enterprise AI modernization.

Future-proof your infrastructure with professional AI integration services! Contact Elinext experts to develop a scalable strategy for implementing LLM gateway applications into your business.

Conclusion

In 2026, an LLM gateway architecture is the foundation of business survival in the data economy. Centralizing model access allows companies to remain agile without sacrificing security or budget. A correctly chosen gateway transforms experimental AI into a stable, industrial-grade tool with predictable costs. As the market continues to expand, integrating these solutions is the fastest path to technological leadership and operational excellence.

LLM Gateways: Terms Explained

Multi-Provider Routing

Automatic redirection of requests between different providers (OpenAI, Anthropic, and others) to optimize cost, response speed, and generation quality in real time.

Failover / Fallbacks

A mechanism for instantaneous switching to a backup model in the event of a primary model failure. It guarantees uninterrupted AI service operation even during technical issues on the vendor’s side.

Semantic Caching

Storing responses based on their meaning rather than just exact text matches. It returns ready-made results for similar prompts, drastically reducing token costs and minimizing latency.
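A minimal sketch of the lookup logic, assuming a toy bag-of-words "embedding" and cosine similarity with a hypothetical 0.8 threshold. A real gateway would use an embedding model and a vector index instead of the `embed` function shown here.

```python
import math
from collections import Counter
from typing import List, Optional, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold
        self.entries: List[Tuple[Counter, str]] = []

    def get(self, prompt: str) -> Optional[str]:
        """Return the cached response for the most similar prompt, if similar enough."""
        query = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))
```

After `put("what is the capital of france", "Paris")`, a lookup for "what is the capital of france please" hits the cache, while an unrelated prompt returns `None` and would be forwarded to the model. The threshold is the main tuning knob: set it too low and users get answers to questions they did not ask.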

Observability / Telemetry

Collecting and analyzing detailed metrics (logs, response times, tokens, etc.) for all requests. It provides full transparency into AI infrastructure performance and simplifies system debugging.

Guardrails

Software filters that validate prompts and responses. They help protect against data leaks, hallucinations, and toxic content, supporting ethical, secure, and compliant LLM usage in production.

Unified API

A standardized interface for interacting with all types of language models. It eliminates the need to rewrite code when switching providers or updating model versions, ensuring seamless integration.

Rate Limiting

A tool for controlling API request frequency. It prevents system overloads, protects against abuse, and ensures resource consumption stays within established budget and technical limits.
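One common way to implement this is a token bucket, sketched below. This is an assumed technique chosen for illustration, not a description of how any specific gateway does it; the injectable `clock` parameter exists only to make the example easy to test.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow up to `capacity` requests in a burst, refilled at `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A gateway would typically keep one bucket per API key or per team and reject or queue requests when `allow()` returns `False`.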

Edge Deployment

Placing gateway capacity on nodes as close to the user as possible. This minimizes network latency and ensures near-instant responses for AI applications globally.

Provider-Agnostic

A vendor-neutral architectural approach. It allows for seamless switching between models to capture the best market terms and performance without incurring technical debt.

Token Budgeting

A system of quotas and token usage limits for different departments or projects. It enables precise cost forecasting and prevents unexpected budget overruns.
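The quota check itself can be very small. The sketch below assumes per-team quotas held in memory under a hypothetical `TokenBudget` class; a real deployment would persist usage and reset it on a billing cycle.

```python
from typing import Dict


class TokenBudget:
    def __init__(self, quotas: Dict[str, int]) -> None:
        self.quotas = dict(quotas)
        self.used: Dict[str, int] = {team: 0 for team in quotas}

    def try_spend(self, team: str, tokens: int) -> bool:
        """Record usage and return True only if the team stays within its quota."""
        if team not in self.quotas:
            return False
        if self.used[team] + tokens > self.quotas[team]:
            return False
        self.used[team] += tokens
        return True

    def remaining(self, team: str) -> int:
        return self.quotas[team] - self.used[team]
```

With a quota of 1,000 tokens, a 600-token request succeeds, a following 500-token request is refused, and `remaining` reports 400.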

FAQ

What is an LLM gateway?

An LLM gateway is a centralized proxy layer that connects applications to multiple AI models via a single interface. It is used to manage traffic, security, and costs. Businesses apply it to simplify architecture and avoid vendor lock-in.

How do I choose the right LLM gateway?

The right choice depends on specific needs such as latency requirements, security compliance, and support for your preferred models. The goal is to align your AI infrastructure with business goals so that it stays scalable and reliable.

Are LLM gateways expensive?

There are various pricing models, ranging from free open-source versions to enterprise-grade paid platforms. The cost primarily comprises architectural development, infrastructure overhead, and maintenance of security guardrails.

Can I use multiple gateways at the same time?

Yes, you can deploy multiple gateways for different regions or specific department needs. This avoids a single point of failure, improves global latency, and lets you leverage different providers’ best features at the same time.

Do gateways work with all LLMs?

Most gateways work with a wide range of LLMs through a unified API that translates various formats into a single standard. They support major providers like OpenAI and Anthropic, plus local models (Llama, Mistral) via tools like vLLM or Ollama.

How do LLM gateways improve cost efficiency?

They have built-in features like semantic caching and smart routing that minimize unnecessary token usage. LLM gateway architecture is used to prevent overspending on expensive models. Firms using them report 40% reductions in operational AI costs.

Will LLM gateways replace direct API calls?

Yes, LLM gateways are replacing direct API calls as the standard control plane for AI. Direct calls are increasingly seen as technical debt, whereas gateways provide a unified proxy for seamless model swapping, automatic failover, and centralized security.